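Installation

cd /usr/local/
# upload apache-flume-1.8.0-bin.tar.gz (rz comes from the lrzsz package)
rz
tar xf apache-flume-1.8.0-bin.tar.gz
mv apache-flume-1.8.0-bin flume
vim /etc/profile
# add these two lines to /etc/profile:
export FLUME_HOME=/usr/local/flume
export PATH=$PATH:$FLUME_HOME/bin
source /etc/profile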
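Instead of editing /etc/profile by hand, the two lines can be appended by a script. Below is a minimal sketch using a hypothetical helper, add_flume_env, which is not part of Flume. It looks for an existing FLUME_HOME line first, so running the install steps twice does not duplicate the entries.

```bash
# Hypothetical helper (not part of Flume): append the Flume variables to a
# profile file only if FLUME_HOME is not already exported there.
# Usage: add_flume_env PROFILE_FILE [FLUME_HOME]
add_flume_env() {
    profile=$1
    home=${2:-/usr/local/flume}
    # grep -q succeeds if a FLUME_HOME export is already present
    if ! grep -q '^export FLUME_HOME=' "$profile" 2>/dev/null; then
        printf 'export FLUME_HOME=%s\n' "$home" >> "$profile"
        # single quotes keep $PATH and $FLUME_HOME unexpanded in the file
        printf '%s\n' 'export PATH=$PATH:$FLUME_HOME/bin' >> "$profile"
    fi
}
```

For example, run add_flume_env /etc/profile && . /etc/profile as root. After that, flume-ng version should print the installed version.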

Configuration

cd flume/conf/
# flume-ng only sources flume-env.sh, not the .template file, so copy it first
cp flume-env.sh.template flume-env.sh
vim flume-env.sh
# line 22: uncomment and change to
export JAVA_HOME=/usr/java
# line 33: uncomment and change to
FLUME_CLASSPATH="/usr/local/flume/conf"

vim netcat-logger.conf
a1.sources = r1
a1.sinks = k1
a1.channels = c1
# Describe/configure the source
a1.sources.r1.type = netcat
a1.sources.r1.bind = localhost
a1.sources.r1.port = 44444
# Describe the sink
a1.sinks.k1.type = logger
# Use a channel which buffers events in memory
a1.channels.c1.type = memory
a1.channels.c1.capacity = 1000
a1.channels.c1.transactionCapacity = 100
# Bind the source and sink to the channel
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
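The last two lines of the configuration bind the components together. A source uses the plural key channels, and a sink uses the singular key channel. A typo in either key leaves a component unbound. Below is a minimal sketch of a pre-flight check. The helpers flume_prop and check_flume_wiring are hypothetical and do not come from Flume. They only look at simple "key = value" lines, and they assume the component lists are space-separated.

```bash
# Hypothetical helper: print the value of KEY from a properties FILE
# (last occurrence wins). Usage: flume_prop FILE KEY
flume_prop() {
    sed -n "s/^[[:space:]]*$2[[:space:]]*=[[:space:]]*//p" "$1" | tail -n 1
}

# Hypothetical helper: check that every source and sink declared for AGENT
# has a type and a channel binding. Returns non-zero if anything is missing.
# Usage: check_flume_wiring FILE AGENT
check_flume_wiring() {
    file=$1 agent=$2 status=0
    for src in $(flume_prop "$file" "$agent.sources"); do
        [ -n "$(flume_prop "$file" "$agent.sources.$src.type")" ] ||
            { echo "source $src: missing type" >&2; status=1; }
        # sources use the plural key "channels"
        [ -n "$(flume_prop "$file" "$agent.sources.$src.channels")" ] ||
            { echo "source $src: not bound to a channel" >&2; status=1; }
    done
    for snk in $(flume_prop "$file" "$agent.sinks"); do
        [ -n "$(flume_prop "$file" "$agent.sinks.$snk.type")" ] ||
            { echo "sink $snk: missing type" >&2; status=1; }
        # sinks use the singular key "channel"
        [ -n "$(flume_prop "$file" "$agent.sinks.$snk.channel")" ] ||
            { echo "sink $snk: not bound to a channel" >&2; status=1; }
    done
    return $status
}
```

For example: check_flume_wiring netcat-logger.conf a1 && echo "wiring ok".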

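Start the agent (run it from the Flume home directory so that --conf conf points at the configuration directory):

cd /usr/local/flume
flume-ng agent --conf conf --conf-file conf/netcat-logger.conf --name a1 -Dflume.root.logger=INFO,console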
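The --name argument must match the prefix used by the properties in the configuration file (a1 here). With a name that does not appear in the file, the agent typically finds no sources, channels or sinks to start. Below is a small sketch that lists the agent names a file defines. The helper flume_agents is hypothetical. It assumes every agent declares a "<name>.sources =" line.

```bash
# Hypothetical helper: list the agent names defined in a Flume properties
# file, taken from "<agent>.sources = ..." lines (commented lines are skipped).
# Usage: flume_agents FILE
flume_agents() {
    sed -n 's/^[[:space:]]*\([^#.[:space:]]*\)\.sources[[:space:]]*=.*/\1/p' "$1" | sort -u
}
```

For example, flume_agents conf/netcat-logger.conf should print a1, which is the value to pass to --name.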

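In a new terminal window:

telnet 127.0.0.1 44444

Type any string and press Enter. The first window, where the agent is running, logs the same string as an event.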
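telnet is interactive. For a scripted check, bash can open the TCP connection itself through its /dev/tcp pseudo-path; this is a bash feature, not a POSIX one. Below is a minimal sketch using a hypothetical helper, send_line. It assumes the netcat source keeps its default behaviour of acknowledging each received line (ack-every-event), and it waits at most 5 seconds for that reply.

```bash
# Hypothetical helper (requires bash for /dev/tcp and read -t):
# send one line to a TCP port and print the first line of the reply.
# Usage: send_line HOST PORT TEXT
send_line() {
    (
        # the subshell keeps a failed connection from aborting the caller
        exec 3<>"/dev/tcp/$1/$2" || exit 1
        printf '%s\n' "$3" >&3
        # wait up to 5 seconds for the source's acknowledgement
        IFS= read -r -t 5 reply <&3 && printf '%s\n' "$reply"
    )
}
```

With the agent running, send_line 127.0.0.1 44444 "hello flume" should print the acknowledgement. The agent window should show the event.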

Add a second configuration that tails a file into HDFS:

vim /usr/local/flume/conf/tail-hdfs.conf
# Name the components on this agent
a1.sources = r1
a1.sinks = k1
a1.channels = c1

# Describe/configure the source
# exec runs a command and turns each line of its output into an event
a1.sources.r1.type = exec
# tail -F follows the file by name; tail -f follows the opened file (its inode)
a1.sources.r1.command = tail -F /var/log.txt

# Describe the sink
# sink target: HDFS
a1.sinks.k1.type = hdfs
# target directory; Flume replaces the %-escape sequences with the event time
a1.sinks.k1.hdfs.path = hdfs://hadoop:9000/flume/events/%y-%m-%d/%H%M/
# file name prefix
a1.sinks.k1.hdfs.filePrefix = events-
# round the timestamp down to 10 minutes, so a new directory starts every 10 minutes
a1.sinks.k1.hdfs.round = true
a1.sinks.k1.hdfs.roundValue = 10
a1.sinks.k1.hdfs.roundUnit = minute
# seconds to wait before rolling to a new file (the default is 30)
a1.sinks.k1.hdfs.rollInterval = 3
# file size in bytes that triggers a roll; 0 disables size-based rolling
a1.sinks.k1.hdfs.rollSize = 0
# number of events written before a roll; 0 disables count-based rolling
a1.sinks.k1.hdfs.rollCount = 0
# number of events written to the file before it is flushed to HDFS
a1.sinks.k1.hdfs.batchSize = 10
# use the local time, not a timestamp header, to fill in the path escapes
a1.sinks.k1.hdfs.useLocalTimeStamp = true
# output file type: the default is SequenceFile; DataStream writes plain text
a1.sinks.k1.hdfs.fileType = DataStream

# Use a channel which buffers events in memory
a1.channels.c1.type = memory
a1.channels.c1.capacity = 1000
a1.channels.c1.transactionCapacity = 100

# Bind the source and sink to the channel
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
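With round = true, roundValue = 10 and roundUnit = minute, the %H%M part of the path is computed from the time rounded down to a multiple of 10 minutes. As a result, all events written between 13:40 and 13:49 land in the same directory. Below is a small worked sketch of that mapping. The helper flume_bucket is hypothetical and only mimics the sink's behaviour for this particular path. It assumes GNU date, which supports -d.

```bash
# Hypothetical helper: print the directory the sink above would use for a
# given local time, i.e. .../%y-%m-%d/%H%M/ with minutes rounded down to 10.
# Requires GNU date. Usage: flume_bucket "YYYY-MM-DD HH:MM"
flume_bucket() {
    day=$(date -d "$1" +%y-%m-%d) || return 1
    hour=$(date -d "$1" +%H)
    min=$(date -d "$1" +%M)
    # strip one leading zero so "08"/"09" are not parsed as octal,
    # then round down to a multiple of 10
    min=$(( (${min#0} / 10) * 10 ))
    printf '/flume/events/%s/%s%02d/\n' "$day" "$hour" "$min"
}
```

For example, flume_bucket "2024-05-01 13:47" prints /flume/events/24-05-01/1340/.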

Write a script that keeps appending lines to /var/log.txt:

vim tail.sh

#!/bin/bash
for i in {1..1000}
do
    echo "hello $i" >> /var/log.txt
    sleep 2
done

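Run it with bash. {1..1000} is a bash brace expansion, and a plain POSIX sh such as dash does not expand it:

bash tail.sh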
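If the script has to run under any POSIX sh, the brace expansion can be replaced by a counter loop. Below is a minimal sketch. The function name gen_log and its FILE, COUNT and DELAY parameters are hypothetical additions that make the generator easy to reuse and test.

```bash
# Hypothetical POSIX-sh variant of tail.sh: append COUNT lines
# "hello 1" .. "hello COUNT" to FILE, sleeping DELAY seconds after each.
# Usage: gen_log FILE COUNT DELAY
gen_log() {
    file=$1 count=$2 delay=$3
    i=1
    while [ "$i" -le "$count" ]; do
        echo "hello $i" >> "$file"
        sleep "$delay"
        i=$((i + 1))
    done
}
```

gen_log /var/log.txt 1000 2 reproduces the original script. Add a trailing & to keep it running in the background.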

Start the agent in a new window:

cd /usr/local/flume
flume-ng agent --conf conf --conf-file conf/tail-hdfs.conf --name a1 -Dflume.root.logger=INFO,console

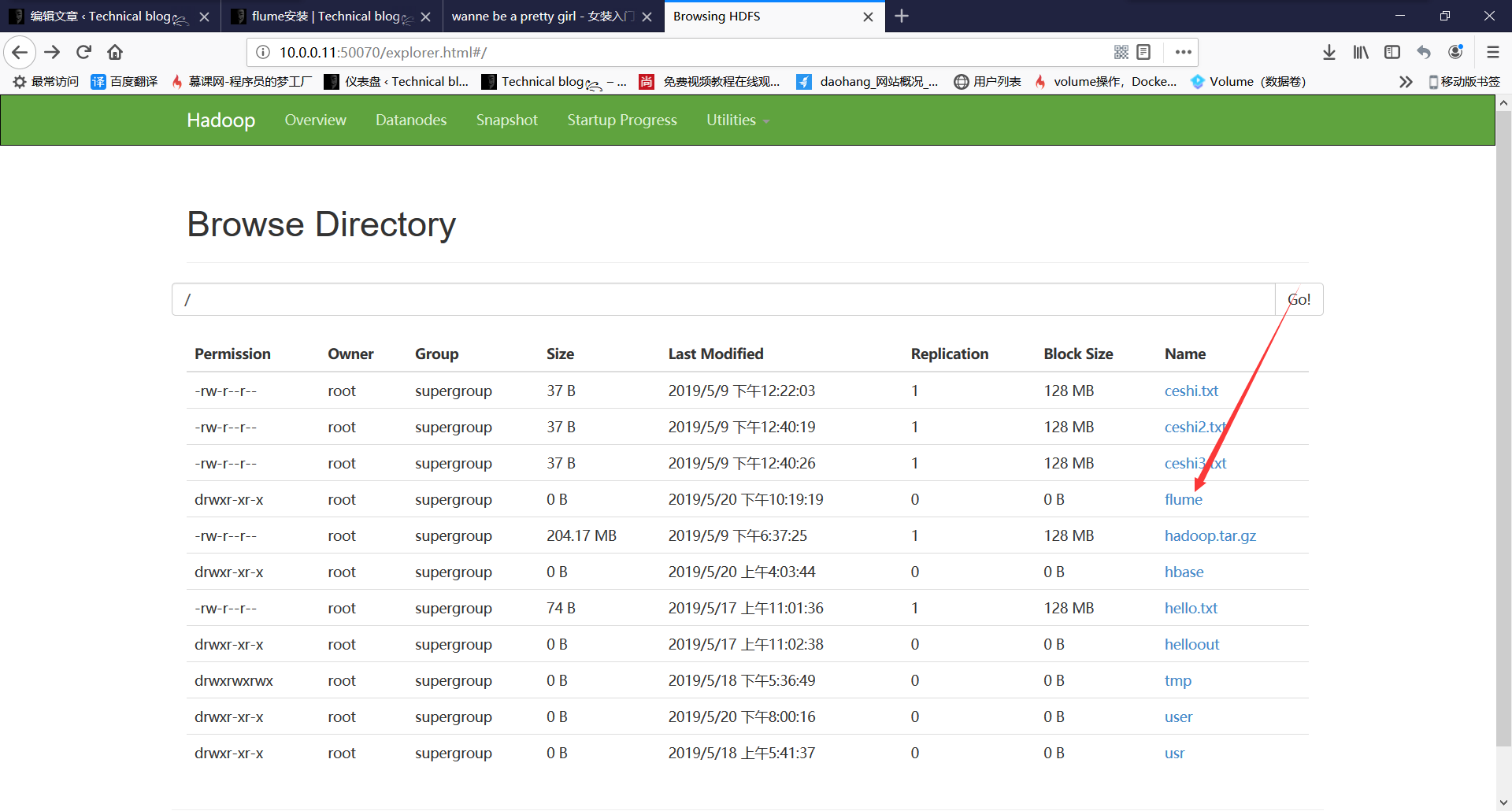

The flume directory is created in HDFS.

Open it to see all the generated data.